It turns out that launching, losing, finding, and recovering a balloon is way easier than getting the imagery ready. Let’s find out why!

(If you see an image in this post, click it. If you regret it I’ll refund you the price of admission.)

If you’ve been following along you’ll know that the goal of this project was to achieve a world first: fully spherical panoramic imagery of a (small) section of our planet as seen from the stratosphere. In addition to imagery I also wanted to put together a video of the whole flight. The video didn’t quite turn out as I hoped; some of the obstacles were too large to overcome with the hardware I sent up. I’ll cover those obstacles in this post.

Where to start?

The Beginning

Let’s cover the evolution of the project. The basics have already been covered and you can refresh yourself by reading them again.

Operation StratoSphere – Overview and History

Go ahead, I’ll wait.

Ok, let’s proceed. I started with just one camera and one homemade cube. Since the goal was a fully spherical image, I had to get creative and treat one camera as if it were six. I made a frame with a guide for the box to sit in, then took the whole silly, unstable contraption out to the backyard. The guide kept me from moving the cube too far from its starting point. I put the camera in picture-every-n-seconds mode and let it run. After a few frames were captured I picked the cube up, rotated it so that the camera covered another face, and put it back in the guide. Repeat for all six faces.

Some notes on the following links: they all use a panorama viewer I put together. I make no claims that it will work (except to say that it does for me). Click and drag to pan the image. On browsers that support it, it will use WebGL for rendering; otherwise it will use canvas. Again, on browsers that support it, pressing F toggles fullscreen. If your browser doesn’t support this mode, press F11 (or your platform’s equivalent) to make your browser fill as much of the screen as possible. The experience is much better when it fills the screen.

That ended up giving the following result. It’s not stitched together well and it definitely isn’t pretty, but there are no holes in the image which means there is full (but not necessarily ideal) coverage. The project was feasible!

After that came a lot of research into balloons, into stitching better, and into building many, many boxes to house the cameras. And, of course, stitching more images.

Here we have me just holding the box up in the street and rotating it to point the camera in all six directions. Visible also is a little miscreant (my son) trying to chase me into the street.

This one was taken from my parents’ roof with a single camera at the end of a pool cleaning pole.

Through much cajoling, begging, and borrowing I was able to acquire a total of four cameras. I put them all in one of my boxes, grabbed my homemade test tripod, and went searching for a suitable spot to test from. With this example we have something a little different. First, here’s a static image like the previous links have been.

With this test, however, I wasn’t just goofing around and further proving to myself that yes, I can stitch images together! No, this time I was doing a full video test. Let’s see how that turned out, shall we? Please note that this link will not work in a web browser that can’t play back WebM video. I have tested and verified that it works in the latest Firefox and Chrome, but it fails in Internet Explorer.

Now we’re getting somewhere! That rather obvious black gap is the two missing cameras. Don’t worry, we’ll fill that in.

One issue that I haven’t addressed yet (really, I haven’t touched on any of them yet) is rotation. As nice as it would be, the camera isn’t going to be stable. Let’s see how it feels to view an uncorrected, rotating video. Also, all six cameras had been acquired by the time of this test.

It kind of sucks to have to chase the view around, doesn’t it? And that’s just rotating around a nice axis. It’s even worse when it rotates around a non-vertical axis as you can’t pan to follow so easily.

The issues

So, I’ve already pointed out one issue: camera rotation. (For the sake of conversation I’m treating the whole cube as a single camera; it just makes explanations a bit less tedious.) Rotation is our first issue. If you were looking closely, you may have seen another one.

Exposure. In the last video it’s pretty obvious that there are some major quality issues. Go ahead and watch it again. This time, don’t pan the view; just pay attention to the area just right of dead center. You’ll see that it comes to a corner pointing down. (The cameras are at angles because I’m suspending the box by a corner.) As the payload rotates, that particular face (all faces, really, but we’re concentrating on one for the moment) looks toward the sun, then continues around to look directly away from it, then comes back around. All the while, the camera software adjusts the exposure to keep the scene visible. As a result, that face doesn’t match the exposure of its neighbors, and during and just after having the sun in view it’s left underexposed because it adjusted for the very bright sun. For this test I tried to correct it in post-production, but you can’t recover detail that’s been lost.

Synchronization. Since we’re not dealing with a static scene (we have clouds moving, the balloon ascending/descending, camera rotation, etc.), we have to use images that were taken at the same moment in time and location in space if we want a good stitched result. This means we need some method of synchronizing our videos.

The solutions

Exposure. This is the easiest one to explain: I can’t fix it. Not with the hardware I used. The firmware on these cameras doesn’t provide a means to lock the exposure value, so, for this iteration, it just has to be dealt with.

Synchronization. This one didn’t turn out to be too bad. Shortly before the balloon is launched I turn on all of the cameras. They don’t all turn on and start recording at the same time, however, so I can’t just assume that 2 minutes into one video puts the balloon at the same location as 2 minutes into another.

I tossed around various ideas to solve this problem. Initially I was thinking maybe enclose the payload in a box and flash a flash bulb in the box then synchronize the cameras based on the video. Or a blinking LED in front of each camera. These may have worked but were over-complicated. I wanted something fast or, preferably, something passive.

The way I synchronized early video attempts (such as the basketball court video) was to bang a rock against a metal pole after the cameras had already started recording. Then I would look at the audio stream for each camera, find where the sharp impact occurred, and use that as the basis for a series of deltas between each video and one common reference video. This worked but wasn’t very accurate. Also, what if I forget to make a sharp impact noise when launching? Further, when a camera’s video file hits 4 gigabytes, the camera stops recording to that file and starts a new one. In practice this transition isn’t lossless, so I can’t use the sharp impact from the first 45 minutes to synchronize the second or third 45 minutes of the flight.
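Finding the impact by eye works, but it's easy to sketch how it could be automated. This is a minimal illustration, assuming each camera's audio has already been decoded into a list of mono samples at a common sample rate, and that the bang is the single loudest sound in every track (a bump of the payload or a gust of wind can easily break that assumption). The function name is hypothetical.

```python
def impact_offsets(tracks, sample_rate, reference=0):
    """Return each track's delay (seconds) relative to the reference track.

    tracks: list of per-camera sample sequences (one mono channel each).
    The impact is approximated as the single loudest sample in each track.
    """
    peak_times = []
    for samples in tracks:
        # Index of the sample with the largest absolute amplitude.
        peak_index = max(range(len(samples)), key=lambda i: abs(samples[i]))
        peak_times.append(peak_index / sample_rate)
    ref_time = peak_times[reference]
    # Positive delta: the bang happens later in that file, i.e. that camera
    # started recording earlier than the reference camera.
    return [t - ref_time for t in peak_times]
```

For example, impact_offsets([[0, 0, 9, 0], [0, 0, 0, 0, -9], [5, 0]], sample_rate=1) returns [0.0, 2.0, -2.0]. It inherits every weakness described above, including the loss of sync at each 4 GB file split.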

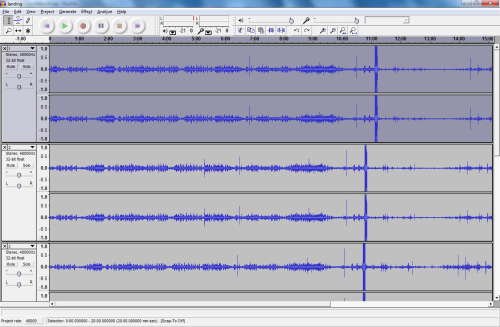

What I ended up going with for the footage you haven’t seen yet (muhaha) is to use a tool called Audacity. Audacity is free, open source, cross-platform software for recording and editing sounds.

Here’s the basic process:

Step 1. Dump the audio from the individual videos.

ffmpeg -i input.mp4 output.wav

Step 2. Load the resulting wave files into Audacity.

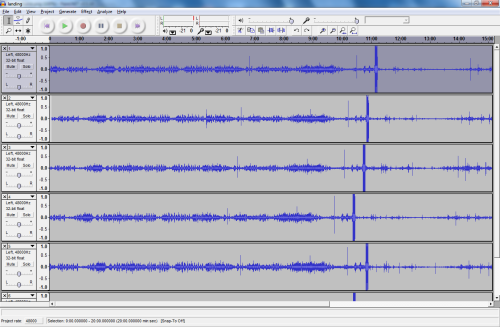

Step 3. Split the stereo track and drop one channel. For what we’re going to do, the second channel is just taking up space.

Step 4. Find a common pattern: some distinctive feature in the waveform that shows up in every track.

Step 5. Choose one waveform to align to. I use the one that has my chosen feature closest to the start as it makes all the deltas go in just one direction. You can choose whichever you want.

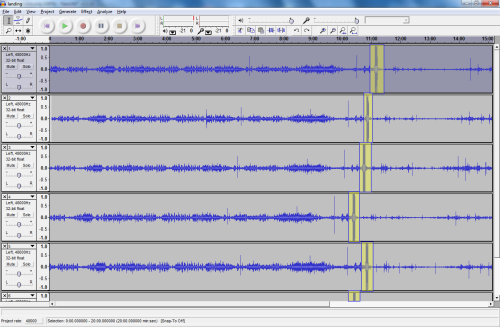

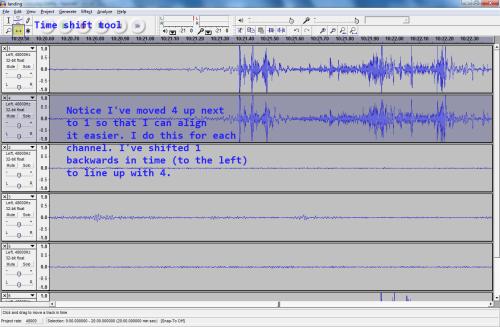

Step 6. Use the time shift tool and the zoom tool to align the waveforms of all the other tracks with the chosen one.

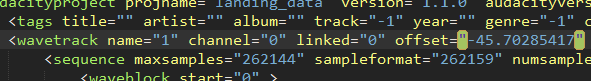

Step 7. Save the Audacity project and open it with a text editor. It’s a simple XML format. What we’re looking for is the offset attribute on the wavetrack tag. Record this value for each channel.

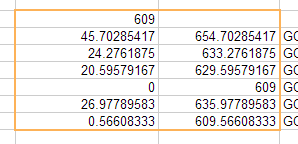
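Copying six offsets by hand is fine, but reading them can also be scripted. A sketch using Python's standard xml.etree.ElementTree, assuming the legacy XML .aup project format and unique track names. The post finds the offset on the wavetrack tag; as far as I know some Audacity versions store it on a waveclip element inside the track instead, so the sketch checks both. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET


def _local(tag):
    # ElementTree reports namespaced tags as "{namespace}name"; keep only the name.
    return tag.rsplit("}", 1)[-1]


def read_track_offsets(aup_text):
    """Return {track name: offset in seconds} from Audacity project XML text."""
    root = ET.fromstring(aup_text)
    offsets = {}
    for track in root.iter():
        if _local(track.tag) != "wavetrack":
            continue
        offset = track.get("offset")
        if offset is None:
            # Fallback: look for the offset on the first waveclip inside the track.
            for child in track.iter():
                if _local(child.tag) == "waveclip" and child.get("offset") is not None:
                    offset = child.get("offset")
                    break
        offsets[track.get("name")] = float(offset or 0.0)
    return offsets
```

Usage would be along the lines of read_track_offsets(open("sync.aup").read()), where sync.aup is whatever you named the saved project.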

Now you have a list of offsets that you can use to translate one moment in time on one video to the same moment in all other videos. In the following image you can see how I’m using my offsets to take the time of 609 seconds in video 4 and find where that same time is in the others.

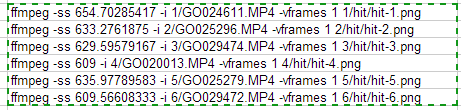
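The arithmetic behind that translation is small. A sketch, assuming each Audacity offset is where that track starts on the shared timeline, so local time t in track k sits at timeline time t + offset_k. The camera names are hypothetical.

```python
def translate_time(t, source, offsets):
    """Map timestamp t (seconds) in video `source` to the same moment in every video.

    offsets[k] is where track k starts on the shared Audacity timeline.
    """
    # Local time in the source video -> shared timeline time.
    timeline = t + offsets[source]
    # Shared timeline time -> local time in each video.
    return {name: timeline - off for name, off in offsets.items()}
```

For example, translate_time(609.0, "cam4", {"cam1": 2.0, "cam4": 0.5, "cam5": -1.0}) returns {"cam1": 607.5, "cam4": 609.0, "cam5": 610.5}.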

At this point it’s a matter of feeding the translated times into ffmpeg to extract a frame (for a static image) or several frames (for a video).

Run those six commands and you’ll have six images from the same point in time and space ready to start stitching.
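Generating those six commands can be scripted as well. A sketch that takes a dictionary mapping each camera name to its local time in seconds and assumes hypothetical file names such as cam4.mp4. It only uses ffmpeg's -ss (seek), -i (input) and -frames:v (number of video frames to output) options, and it builds the commands rather than running them.

```python
def frame_commands(times, pattern="{name}.mp4", out="frame_{name}.png"):
    """Build one ffmpeg command per camera that grabs a single frame at its local time.

    times: {camera name: local time in seconds}.
    """
    cmds = []
    for name, t in sorted(times.items()):
        cmds.append([
            "ffmpeg",
            "-ss", f"{t:.3f}",              # seek to the camera's local time
            "-i", pattern.format(name=name),
            "-frames:v", "1",               # stop after one video frame
            out.format(name=name),
        ])
    return cmds
```

Each list can be passed to subprocess.run(cmd, check=True). For a video rather than a still, replacing "-frames:v", "1" with a duration ("-t", "10") and an output pattern like frame_cam4_%05d.png would dump a run of frames instead.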

Rotation. This one is a bit tricky and I tried coming up with some super techy solutions to solve it. I wrote a rudimentary computer vision application that would try to extract the rotation data from a video. The intent was to maybe play it back in reverse on the controls of the viewer to try to counteract the rotation. In practice it didn’t work so well. It wasn’t extremely accurate and it suffered from a lot of drift. It also wasn’t very smooth. The smoothness was mainly my fault. I wasn’t interpolating between counteractions. If anyone is interested in seeing the viewer with counteraction shoot me a message and I’ll see if I still have it.

Nonetheless, the work did occur and I do have something to show for it. Here’s a video with a line being drawn indicating the software’s guess of the direction and exaggerated magnitude of the rotation.

Direct YouTube link in case the embed doesn’t work

In order to fully explain how I finally managed to remove the effects of rotation we must first dive into the stitching process.

Stitching

At this point we’ve got enough data that we can dump frames. Let’s just concentrate on one frame for the time being. (One frame from each of the six cameras, that is.)

They look like this (Image link is to a gallery):

We need to get those images stitched into one larger image. To do so, we’ll use a tool called Hugin and several tools from the Hugin installation to help automate parts of it. Hugin is an open source utility that makes use of several panorama creation tools.

When the images are loaded into Hugin it will ask for some information about the lenses used. These cameras use a full-frame fisheye lens with a horizontal field of view (HFOV) of 170 degrees. 170 degrees horizontally only gives us about 127.5 degrees vertically, which isn’t a whole lot of overlap. To maximize overlap I rotated each camera 90 degrees from its neighbor in the payload box. The more overlap the better, as fisheye lenses (especially cheap ones) tend to lose a lot to blurring and chromatic aberration at the edges.
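The 127.5 figure follows from scaling 170 degrees by the frame's aspect ratio, which assumes an equidistant fisheye (angle grows linearly with distance from the image center) and a 4:3 frame. The same idealization gives a rough overlap between two adjacent cube faces whose optical axes are 90 degrees apart: the two half-angles that reach across the shared seam, minus 90. This ignores corners and the blurry edges, so treat it as a back-of-the-envelope sketch.

```python
def fisheye_vfov(hfov_deg, width, height):
    """Vertical FOV of a full-frame fisheye, assuming an equidistant mapping."""
    return hfov_deg * height / width


def seam_overlap(fov_a_deg, fov_b_deg, axis_separation_deg=90.0):
    """Angular overlap across a seam between two cameras.

    fov_a_deg / fov_b_deg: each camera's FOV along the direction of the seam.
    """
    return fov_a_deg / 2 + fov_b_deg / 2 - axis_separation_deg
```

With these numbers, fisheye_vfov(170, 4, 3) is 127.5; a short side meeting a short side overlaps by 37.5 degrees, while a short side meeting a long side overlaps by 58.75 degrees, which is one rough way to see why rotating neighboring cameras helps.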

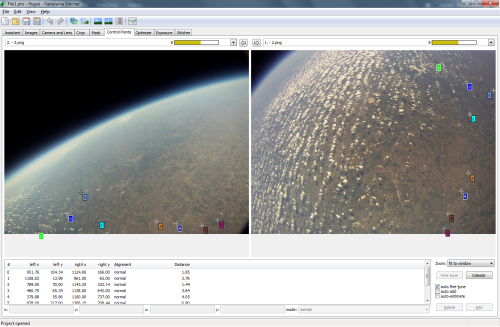

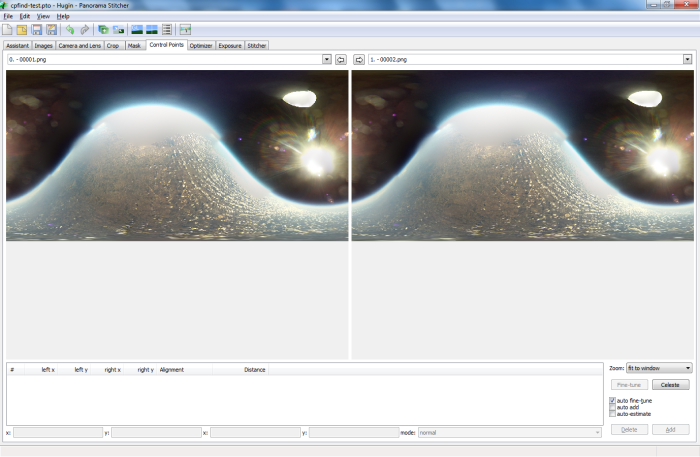

Next we need to find common points among images that overlap. Attempting to do so with the automatic control point finder tends not to give very good results. With my data set it usually doesn’t find any control points. (Hugin devs out there, does the control point finder remap to remove distortion before attempting to find control points if it knows the FOV and lens types? If not, I think it might be useful, even if it is mostly naive.)

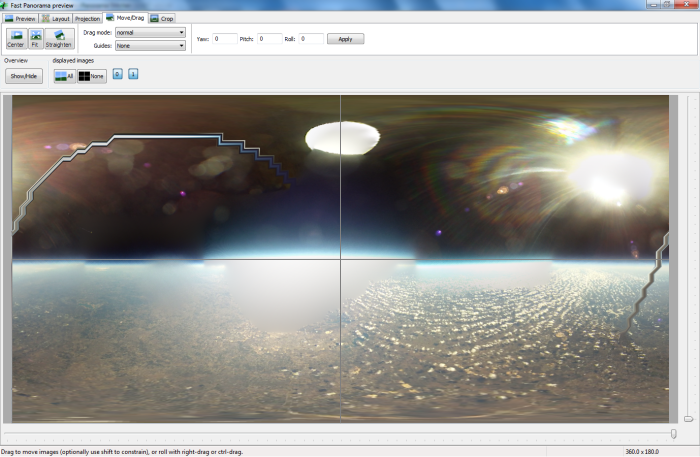

Here’s a screenshot of manual control point placement across two images.

This process contains a biiiiit of tedium.

Once we have all of the images (or as many as we can get) connected with control points, we can ask the program to optimize some parameters. We have a spherical image when all images are accounted for. Hugin’s tools look at how the control points relate to each other and try to find a transformation that fits them onto a surface on which they all make sense. It reports a deviation value that we can use to judge how well it aligned our images. There’s a lot to this process and I won’t get into all of it. It involves placing control points, fine-tuning them, optimizing, looking for major stitch issues, checking control point distances, removing bad control points, and optimizing some more. Rinse and repeat until the result starts to look good. As I hinted with the overlap discussion above, we’re not dealing with the most optimal source data here.

There are some other factors that come into play with panorama stitching. You want to avoid placing control points on objects that move between shots. Typical panoramas are made with one camera that is rotated after each photo is taken, so anything that moves between photos and has control points on it will severely hinder the stitching process.

You also want to avoid placing control points on objects at largely different distances from the lens. Don’t mix control points between objects a couple feet away and a hundred feet away as the optimizer won’t be able to make them fit to one transform. I’m able to mostly ignore this issue at very high altitudes as everything is so far away that the difference between the closer and the further objects is so slight that it doesn’t do much to negatively impact the optimization. Of course, that means I don’t allow any control points to be placed on anything attached to the camera payload, including the parachute and the balloon.
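To put numbers on that, the angular parallax between two lens positions a baseline b apart, looking at an object at distance D, is atan(b/D). The 0.1 m baseline below is a hypothetical stand-in for the spacing between lenses in the payload, not a measured value.

```python
import math


def parallax_deg(baseline_m, distance_m):
    """Angular shift (degrees) of an object seen from two points baseline_m apart."""
    return math.degrees(math.atan2(baseline_m, distance_m))
```

With that assumed baseline, parallax_deg(0.1, 1.0) is about 5.7 degrees, while parallax_deg(0.1, 30000.0) (roughly 98,000 feet away) is about 0.0002 degrees, which is why distant ground features can share one transform and anything attached to the payload cannot.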

In order to place control points you have to have common features. That’s why you need the overlap between images. The more overlap, the better the result. In the sample images above we have one image, the fourth, that can’t be connected to any of the others. And it’s not because there’s no overlap. There are three issues with the fourth image that prevent us from finding control points in it.

First, it’s just starting to rotate from the black of space toward a bit of the ground. That means the camera had brightened the image to compensate for having nothing to look at, and it has overexposed the ground. The detail has been lost.

Second, it’s looking mostly at black space and the balloon. Since we can’t place control points on the balloon and there are no features in the black space to find commonality with in other images we can’t actually place any control points.

Third, the sun is coming in at a very shallow angle, causing some really bad lens flare and highlighting imperfections in, and dirt on, the lens. If there were anything to see past the lens flare, it would be masked rather badly by the glare.

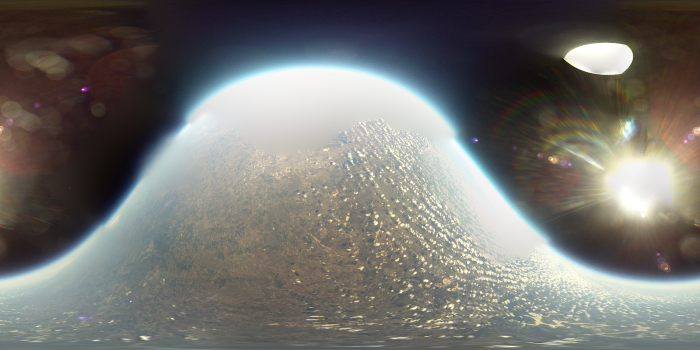

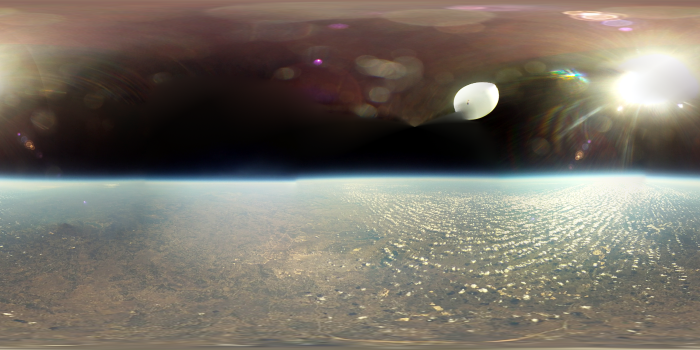

So, all that together means we can’t use this image for this panorama. After creating the panorama we have the following image to look at (I cheated a bit for this one: I tried to correct the overexposure in the fifth image, and I also manually leveled the horizon):

How can we fix that hole? Well, we’ve already established that the cameras are rotating. At this altitude another few seconds won’t make much difference in how the scene below looks, so we can wait a few ticks for the cameras that didn’t have good imagery to come around to an angle where they can see something we can stitch from, namely the ground. Then we can merge the two Hugin project files and have good control points all around for this altitude, and probably for a good range of the high-altitude imagery.
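The post doesn't spell out how the two projects were merged, and Hugin's own tools may well offer a better route. One way to think about it, sketched here under the assumption that both .pto files list the same six cameras in the same order: because the cameras are rigidly mounted, control points found at a different moment still constrain the same relative geometry, so the control point lines (the lines starting with "c ") can be pooled into one template. The function name is hypothetical.

```python
def merge_control_points(project_a, project_b):
    """Return project_a's text with project_b's control point ("c ") lines added.

    Assumes image indices (n/N) refer to the same physical camera in both files.
    """
    lines_a = project_a.splitlines()
    existing = {line for line in lines_a if line.startswith("c ")}
    extra = [line for line in project_b.splitlines()
             if line.startswith("c ") and line not in existing]
    # Insert after A's last control point (or at the end) to keep them grouped.
    last_c = max((i for i, line in enumerate(lines_a) if line.startswith("c ")),
                 default=len(lines_a) - 1)
    merged = lines_a[:last_c + 1] + extra + lines_a[last_c + 1:]
    return "\n".join(merged) + "\n"
```

The merged project still references project A's images; project B's points simply act as extra constraints on the same rig geometry.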

I did just that. Several times, actually. I was trying to get the best control point data that I could as, as you’ll recall, the goal of this project isn’t just static imagery. Without further ado, I give you the best single frame I was able to create from an altitude of 96 thousand feet. Definitely click this one.

Rotation Removal

By this point we know how to create panoramic images, and we even have a control point and optimization parameters template. The next step involves hundreds of hours of creating thousands of stitched frames. Each pano frame comes from six camera images. After hundreds of gigabytes and hundreds of CPU hours I finally have 4437 frames stitched from 26,622 source images. Finally, we can make a video. But there’s still one major problem. That’s right, the rotation.

Here’s what that video looks like:

Direct YouTube link in case the embed doesn’t work

For an idea of how poorly behaved that is in the panorama viewer, see the following link:

So, we still have to correct it. Remember before I said I manually straightened the horizon for the static shots? Well, I can’t exactly do that for all 4437 images. Rather, I guess I could but I certainly don’t want to. Not only would I have to straighten each one to the best of my ability but I’d have to make sure it’s pointed in the right direction so as to be made into a video properly without the center point jumping all over. What to do?

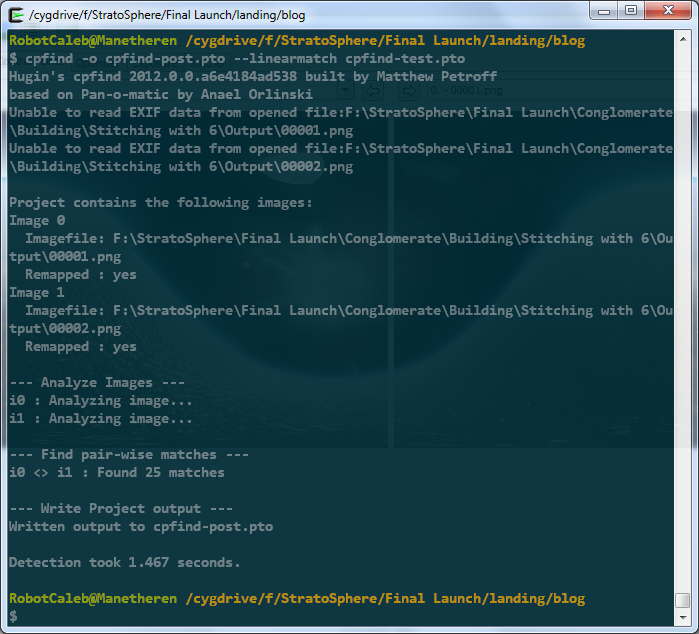

Well, included in the Hugin panorama tools is a program called cpfind. This program didn’t work very well when we asked it to find control points between the six images of one frame. But what if you compared two sequential stitched images? I bet we’d get a lot better results as they’re mostly the same image. Let’s try.

First, we create and save a Hugin project, cpfind-test.pto, containing two sequential stitched images.

Then we run cpfind against it with the --linearmatch parameter, which tells it to do linear image matching; that is, to match each image in the project only against its immediate successor.

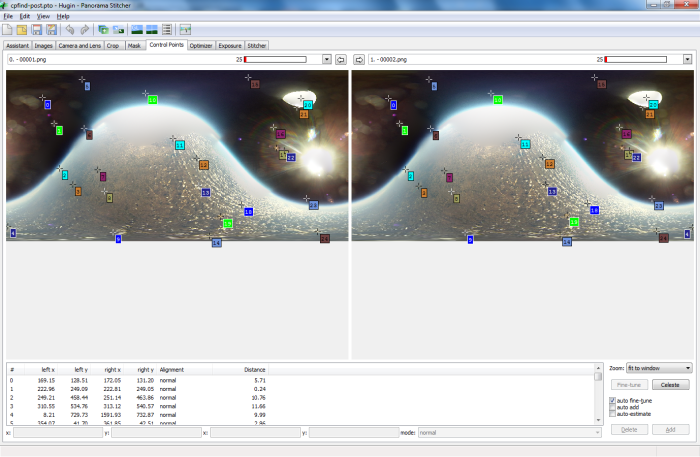

Let’s open the resulting project in Hugin and look at the control points.

Okay, that looks pretty good. It did find some points on the balloon itself, and on the lens and dust flares, but that’s okay. For this round we don’t care. We just want a good representation of what it would take to align one image to another. Since they’re so close together in time (and space!), about 33 milliseconds apart, we should be able to align them so that they’re almost identical.

We run another optimize pass, this time using position, incremental from anchor as what to optimize. When we look in the GL viewer and toggle back and forth between the two images we see that they have, in fact, been lined up quite nicely. This would be difficult to show in screenshots, so you’ll have to trust me. Also, we could get them to line up a bit better if we fine-tune all control points through the menu, but that’s not super important for this step.

Now that we have a good idea of how the images are related to each other, we can manually correct the horizon and it will cascade to the rest of the images. (In this case, to the second and only other image)

All that’s left is to have Hugin save out the remapped images, and we’ll have two images that point in mostly the same direction with a mostly straight horizon. Then it’s just a matter of creating a Hugin project file containing all of the images we want to make a video from and repeating the process. It’s best to do as much of this outside of Hugin as you can, as it gets really slow when dealing with hundreds or thousands of images.
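Doing this outside the GUI mostly means chaining Hugin's command-line tools. A sketch that only builds the commands, assuming the flags as I understand them from the Hugin documentation: pto_gen -p/-f for lens projection (4 taken to mean equirectangular) and field of view, cpfind --linearmatch, autooptimiser -p for pairwise yaw/pitch/roll optimization starting from the anchor, and nona -m TIFF_m for one TIFF per input. Check each tool's --help before relying on it. The file names are hypothetical, and leveling the horizon still happens by hand in Hugin between the optimize and remap steps.

```python
def stabilize_commands(images, project="all.pto", out_prefix="stabilized_"):
    """Build (not run) the command-line steps mirroring the GUI workflow."""
    cp = project.replace(".pto", "_cp.pto")
    opt = project.replace(".pto", "_opt.pto")
    return [
        # New project from stitched equirectangular frames, 360 degrees wide.
        ["pto_gen", "-p", "4", "-f", "360", "-o", project, *images],
        # Match each image only against its immediate successor.
        ["cpfind", "--linearmatch", "-o", cp, project],
        # Pairwise yaw/pitch/roll optimization, starting from the anchor image.
        ["autooptimiser", "-p", "-o", opt, cp],
        # (Open opt in Hugin, level the horizon, set output options, save.)
        # Remap every image into its own TIFF using the saved positions.
        ["nona", "-m", "TIFF_m", "-o", out_prefix, opt],
    ]
```

Each command can then be run with subprocess.run(cmd, check=True), pausing before the nona step for the manual horizon fix.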

Do note that this won’t fully remove our wavy horizon issue, as all it really is is a form of dead reckoning. That is, the position of any one image is only estimated from the known position of the previous image, which itself was estimated. The errors accumulate the further down the chain you go. But, for my data set, it got me close enough to run the next step. Before we go there, though, here’s an example of what the output looks like at this stage.
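Dead reckoning is easiest to see in one dimension. This sketch simplifies the problem to yaw only (the real case is a full 3D rotation) and shows how an error in a single frame-to-frame estimate carries into every later frame. The function name is hypothetical.

```python
def chain_orientations(relative_steps, start=0.0):
    """Dead reckoning: each absolute angle is the previous one plus a relative step."""
    absolute = [start]
    for step in relative_steps:
        absolute.append(absolute[-1] + step)
    return absolute
```

With true steps of 1.0 and one step misestimated as 1.25, chain_orientations([1.0, 1.25, 1.0, 1.0]) differs from chain_orientations([1.0] * 4) by [0, 0, 0.25, 0.25, 0.25]: every frame after the bad estimate is off by the same amount, and many small errors of varying sign add up into the slow wander visible in the horizon.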

Okay, so we still have a bit of a wavy horizon. But not to worry; we can do something I thought was a bit clever. Let’s take all of the new, mostly corrected images we created and put them in a new Hugin project. Then let’s run cpfind against them, again asking it to compare each image only to the next one, not to all of them. We can’t just leave it at that, though, as it’s still finding control points on the lens flare and on the balloon. These control points will mess with the alignment in a major way and prevent us from ever getting a clean horizon.

So, let’s write some Python. You Python writers out there, please forgive me. This is probably my second Python script ever and I’m sure I did everything wrong. At least it works.

cleancp.py

What this script does is look at the control points defined in a Hugin project file and remove every control point that lies in the upper half of the image. With a little thought the reason should be clear, but just in case, I’ll explain. We are up so high that there is nothing above us but the balloon and the sun. All of the interesting stuff (read: control point material) is below us. We also aren’t up so high that the horizon sits far below the center of the image. So, if we remove all of the control points found above the middle line, we should be left with control points on valid and, more importantly, stationary imagery. Another quick pass (overnight) of the optimizer against the full data set and we have a project file in which we can manually line up the horizon. The correction cascades down the chain and every image should come out nicely straightened.
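The original cleancp.py isn't reproduced here, so the following is an independent sketch of the same idea. It assumes the plain-text PTO format, in which image lines start with "i " and carry the height as an h token (e.g. h1024), and control point lines look like "c n0 N1 x... y... X... Y... t0", where n/N are the two image indices and y/Y the vertical pixel coordinates in each. Dropping a point when either end is in the upper half is my choice, not necessarily what the original script does.

```python
def clean_control_points(pto_text):
    """Drop control points with either end in the upper half of its image."""
    lines = pto_text.splitlines()

    # Image heights, in the order the "i" lines appear (image 0, 1, 2, ...).
    heights = []
    for line in lines:
        if line.startswith("i "):
            h = next(tok for tok in line.split() if tok.startswith("h"))
            heights.append(float(h[1:]))

    def field(tokens, key):
        # Value of the first token starting with key, e.g. "y873.7" -> 873.7.
        return float(next(t[1:] for t in tokens if t.startswith(key)))

    kept = []
    for line in lines:
        if line.startswith("c "):
            tokens = line.split()[1:]
            n, big_n = int(field(tokens, "n")), int(field(tokens, "N"))
            y, big_y = field(tokens, "y"), field(tokens, "Y")
            # Image y grows downward, so the upper half is y < height / 2.
            if y < heights[n] / 2 or big_y < heights[big_n] / 2:
                continue
        kept.append(line)
    return "\n".join(kept) + "\n"
```

Reading the project, passing its text through clean_control_points, and writing the result to a new .pto leaves everything except the upper-half control points untouched, ready for the next optimizer pass.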

My first test resulted in a file I called ‘sexyhorizon’ because it worked so well. Here it is:

I have one last stitched video to show before I jump into some miscellaneous stuff. This is a video of the apex of the flight. Taken at 96,382.88 feet, this video shows the balloon reach its largest diameter, then burst. The first link is in real time; the second is at half speed.

Miscellaneous

It’s been a long road. This project has been two years in the making. It has involved thousands of dollars, hundreds of hours spent learning (stitching, python, electronics design, embedded programming, social interacting, ffmpeg incantations), amateur radio exam cramming, bleeding, social interacting, and many other things. I definitely stepped out of my comfort zone and tried new things and learned a ton.

I’m about 99% satisfied with the results and have a ton more footage that I can process if I find the desire.

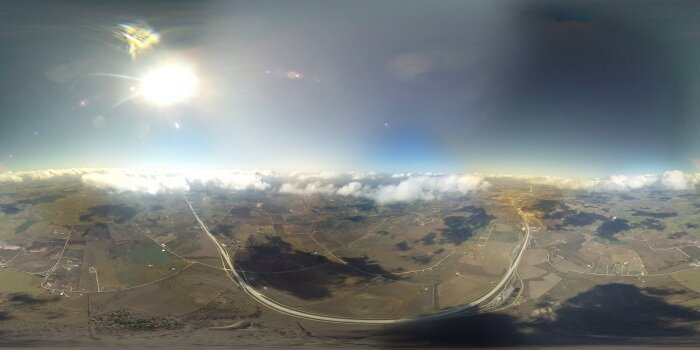

Some more goodies below. First, the balloon at about 1.4 miles above the ground, or 7.5k feet.

Next, about 2k feet lower, at 1 mile up.

Then, about 12 seconds before impact with the ground, at about 300 feet.

Okay, another video. This one is all six cameras as they saw the end of the descent and subsequent impact with the ground. (If you watch the bottom middle camera when it comes to a rest on the ground you can see how it adjusts the exposure to bring out the detail in the dark scene.)

Direct YouTube link in case the embed doesn’t work

And, lastly, here’s a video showing the balloon burst as seen by all three upward-facing cameras.

Direct YouTube link in case the embed doesn’t work

Acknowledgements

This project was mostly me, but there are some people I do want to thank publicly.

My wife, Jennifer, and my son, Perrin. My time is your time, now. (Until the next project comes along!)

My brother, Jordan. Thanks for your early payload brainstorming, and your REI yard sale voodoo that acquired two of the cameras. Also, thanks for retrieving the first balloon from that tree.

John, a longtime friend. Thank you for helping this project reach its goal. Without your help this project might have not been finished anytime near when it was.

Team Prometheus’ Monroe and Stewart. Thanks for your assistance with the last two launches, and for supplying the hydrogen.

Steve, Ben, Ryan. Thanks for helping out.

To everyone else involved, thanks.

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth | MoreDailyFeeds

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth « VidenOmkring

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth « シ最愛遲到.!

Pingback: Tech-Critics: Watch a Balloon Explode 100,000 Feet Above the Earth

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth | Social Network Background Check

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth | Computer Repair Gainesville

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth | 1v8 NET

Pingback: Così esplode un palloncino a 3000 metri sopra la Terra

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth | Real True News

Pingback: Watch A Balloon Explode 100,000 Feet Above The Earth | Gizmodo Australia

Pingback: WATCH Balloon exploding at 100,000 feet in 'Operation Stratosphere' - 4unews - News for you | 4unews - News for you

Pingback: Watch a Balloon Explode 100,000 Feet Above the Earth « Robot Insurance

Pingback: r6criações | sites e sistemas

Pingback: Operation StratoSphere